Sometime in the past eighteen months, a line was quietly crossed. AI crawlers – the automated agents that scrape the web to feed training datasets and power real-time AI search products – went from a minor line item in server logs to a genuine operational problem. In March 2025, Cloudflare reported that AI crawlers were generating more than 50 billion requests per day across its network – just under 1% of all web traffic it processes. Between May 2024 and May 2025, overall AI crawler request volume on Cloudflare’s network grew by 18%.

For hosting companies, this is not an abstract copyright debate. It is a resource consumption problem. AI crawlers generate load – CPU cycles, bandwidth, I/O – that hosting providers pay for but nobody pays them for. On shared hosting, a single site being aggressively crawled by multiple AI agents can degrade performance for every other customer on the server. The industry’s response has been fragmented but accelerating, and the approaches taken by providers in the US and Europe reveal meaningfully different philosophies about whose problem this is to solve.

Cloudflare: The Industry’s Most Aggressive Response

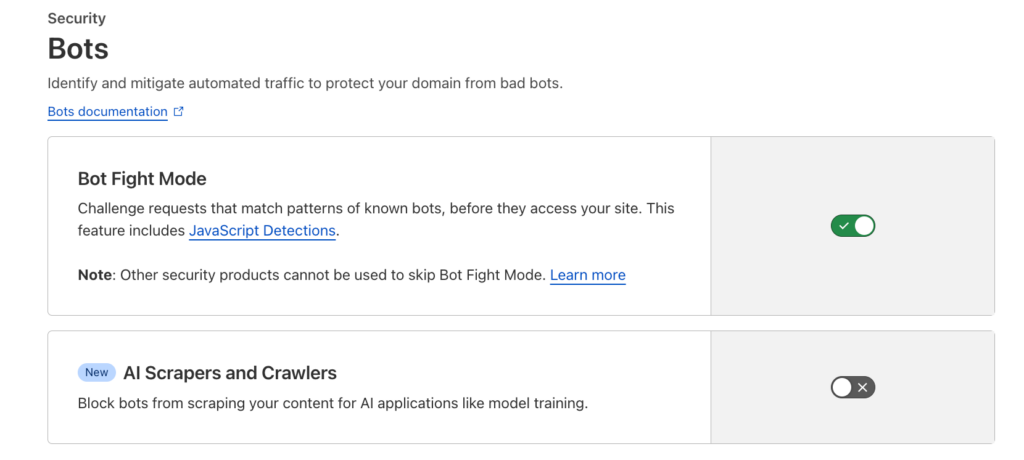

Cloudflare has moved faster and further than any other infrastructure company on AI crawler management – and its approach has evolved rapidly.

The company first introduced its “AI Scrapers and Crawlers” toggle on July 3, 2024, available on all plans including the free tier. A single click in the dashboard (Security > Bots) blocks verified AI crawlers plus unverified bots exhibiting similar scraping behavior. By July 2025, Cloudflare had shifted its default posture: every new domain onboarded to the platform is now prompted to choose whether to allow AI crawlers, with blocking as the default option.

The scale of the problem Cloudflare is addressing explains the urgency. According to the company’s own crawler analysis published in July 2025, GPTBot (OpenAI) surged from 5% to 30% of AI crawler market share between May 2024 and May 2025 – a 305% increase in raw request volume. Meta’s meta-externalagent emerged as a new major presence at 19% share. ByteDance’s Bytespider, once the dominant crawler at 42% share, collapsed to 7%.

But Cloudflare’s most consequential move came in July 2025, when it introduced the concept of pay-per-crawl – a model that lets site owners charge AI companies for access to their content, with a minimum of $0.01 per request. The feature, rebranded as AI Crawl Control and moved to general availability in August 2025, gives site owners three options for each AI crawler: Allow (free access), Charge (require payment), or Block (deny entirely). The pay-per-crawl component remains in private beta as of early 2026, but the concept represents the most commercially ambitious response to the AI crawling problem yet attempted at infrastructure scale.

SiteGround: The Training-Versus-Retrieval Distinction

SiteGround has taken a different and arguably more nuanced approach: blocking AI training crawlers at the server level by default, while allowing user-action crawlers through.

The distinction is important. Training crawlers – GPTBot, ClaudeBot, Bytespider, CCBot, and others – scrape content to build and refine AI models. User-action crawlers – ChatGPT-User, OAI-SearchBot, Claude-SearchBot, PerplexityBot, Google’s Gemini-Deep-Research – fetch content in real time when a human user asks an AI tool a question. SiteGround blocks the former by default and allows the latter, reasoning that training scraping offers site owners no direct benefit, while user-action crawling is effectively a form of referral traffic.

As SiteGround explained in its blog post on the policy: the company blocks crawlers “specifically designed to scrape content for AI model training purposes,” while allowing crawlers “used when real users interact with AI platforms like ChatGPT, Claude, Gemini, or else.” Customers who disagree with either default – wanting to allow a training crawler or block a user-action crawler – can request changes through support or add manual .htaccess rules.

This training-versus-retrieval framework is the most sophisticated approach any hosting company has taken. It acknowledges that not all AI crawling is equivalent, and that site owners have legitimate reasons to want visibility in AI-powered search results while protecting their content from being absorbed into training datasets. For the broader hosting industry, SiteGround’s distinction is likely a preview of where the conversation will settle: granular control based on crawler intent, not a binary block-or-allow decision.

IONOS: Resource Protection on Shared Infrastructure

IONOS, Germany’s largest hosting provider, has responded to the AI crawler problem with a focus on infrastructure protection rather than content control. According to reporting by SEO Südwest, IONOS returns HTTP 429 (“Too Many Requests”) responses to certain AI crawlers on shared hosting packages – specifically targeting training bots like GPTBot and ClaudeBot while allowing user-facing crawlers like OAI-SearchBot and ChatGPT-User through.

The company’s rationale is explicitly operational: AI crawler load “has risen enormously in recent months,” and the uncontrolled traffic was degrading shared server performance. IONOS has acknowledged that this rate-limiting may reduce customers’ visibility in AI-generated search results and has stated it is working on giving customers more control over the policy.

The IONOS approach – rate-limiting rather than hard blocking, applied at the infrastructure level rather than the customer level – reflects a hosting provider’s primary obligation: keeping the platform stable for all customers. It is less elegant than SiteGround’s nuanced framework, but for a shared hosting environment where a single misbehaving crawler can create cascading performance issues, it is a pragmatic response.

WP Engine and Kinsta: Two Ends of the Managed WordPress Spectrum

In the managed WordPress segment, two of the largest providers have taken opposite approaches.

WP Engine handles AI bot mitigation through its Cloudflare-based WAF layer. The company reported mitigating 75 billion bot requests across its platform in 2025 – a number that reflects the combined volume of AI crawlers, traditional scrapers, and other automated traffic. WP Engine also updated its billing to exclude suspected bot traffic from billable metrics. However, the company does not offer a user-facing toggle; bot management is handled automatically at the infrastructure level.

Kinsta has taken the opposite stance: it does not block AI crawlers and has committed to not charging customers for bot-generated bandwidth. Rather than intervening in which bots can access customer sites, Kinsta absorbs the cost of bot traffic and passes the blocking decision to customers, who can use their own Cloudflare accounts or other tools. The company’s CTO has stated that Kinsta is “working internally and with Cloudflare to improve bot filtering” but prioritizes customer choice over platform-level blocking.

The Website Builder Response: Squarespace Leads, Wix Lags

Among website builder platforms, the response has been uneven.

Squarespace offers the cleanest implementation: a dedicated checkbox labeled “Block known artificial intelligence crawlers” in Settings > Crawlers, separate from the search engine crawler toggle. When enabled, it blocks a comprehensive list of over 20 AI agents – including GPTBot, ClaudeBot, Bytespider, Meta-ExternalAgent, and Google-Extended – via robots.txt. The toggle is off by default, giving site owners an explicit opt-in.

Wix provides a robots.txt editor in its SEO settings but no one-click AI blocking toggle. Users must manually add user-agent directives for each crawler they want to block – a process that requires knowing which crawlers exist and how to format the rules. For the average Wix user, this is functionally equivalent to having no feature at all.

The Gap: Who Has Not Acted

Notably absent from the list of providers with built-in AI crawler controls are several of the world’s largest hosting companies. Most of them does not offer any dedicated AI crawler blocking feature as of early 2026. Their customers must rely on manual robots.txt editing, .htaccess rules, WordPress plugins, or external services like Cloudflare.

In the Asian market, the picture is similarly sparse. Japan’s Xserver launched a one-click AI crawler blocking feature on January 7, 2026 – the first such tool from a Japanese hosting company – that blocks 22 known AI crawlers at the domain level. The feature is off by default and available free on all plans. Beyond Xserver, no other Asian hosting company – including major providers – has shipped a comparable product.

The WordPress Plugin Ecosystem

Where hosting providers have not acted, the WordPress plugin ecosystem has stepped in. Several plugins now offer AI crawler management:

- Block AI Crawlers – automatically updates robots.txt and adds meta tags on activation. No configuration required.

- Bot Traffic Shield – real-time user-agent blocking combined with robots.txt rules, with a curated and regularly updated blocklist.

- Known Agents (formerly Dark Visitors) – continuously updated crawler database with analytics showing which AI agents are visiting and how often. Also available as a standalone service with integrations for Cloudflare, AWS, Fastly, and Shopify.

These plugins fill a real gap, but they also highlight a limitation: robots.txt is a voluntary protocol. A well-behaved crawler like GPTBot respects robots.txt directives. A less scrupulous scraper does not. Server-level blocking – the approach taken by SiteGround, IONOS, and Xserver – is more effective precisely because it does not rely on the crawler’s cooperation.

China: A Different Problem Entirely

The AI crawler blocking conversation in Western markets assumes a shared technical and legal framework: site owners publish robots.txt directives, well-behaved crawlers respect them, and the debate centers on whether blocking should be opt-in or opt-out. In China, the dynamics are fundamentally different – shaped by the Great Firewall, a distinct regulatory environment, and the fact that the most controversial AI crawler in the world is Chinese.

The Great Firewall as an inadvertent shield. China’s internet infrastructure blocks major Western AI services – ChatGPT has been inaccessible since March 2023, with Claude and Gemini similarly blocked. While this blocking is primarily inbound (preventing Chinese users from accessing foreign AI), it also creates significant barriers for outbound crawling by Western bots. GPTBot, ClaudeBot, and other Western AI crawlers likely face substantial difficulty reaching Chinese-hosted content. Research published in the Journal of Cybersecurity (Oxford Academic) has documented that over half of China’s 13,508 government websites are inaccessible from abroad, with roughly 10% showing deliberate server-side or DNS-based blocking – a “reverse Great Firewall” that, while not specifically targeting AI crawlers, limits foreign data collection from Chinese infrastructure.

No Chinese host offers a dedicated AI crawler blocking tool. Despite the global trend, no Chinese hosting provider – including Alibaba Cloud, Tencent Cloud, and Huawei Cloud – has shipped a one-click AI crawler blocker comparable to Cloudflare’s toggle or Xserver’s feature. The major cloud providers offer general-purpose WAF and bot management modules that can be configured to block AI crawlers – Alibaba Cloud’s WAF 3.0 includes a bot management module with crawler threat intelligence, and Tencent Cloud’s WAF provides rule-based and AI-powered bot identification – but these require manual configuration and technical expertise. For the typical shared hosting customer, the tools are effectively inaccessible.

The Bytespider problem. The irony is that China produced the most aggressive AI crawler the web has ever seen. ByteDance’s Bytespider has been documented scraping at approximately 25 times the speed of GPTBot and 3,000 times the speed of ClaudeBot. Chinese developer communities have reported sites receiving 460,000 requests in a single morning, consuming over 7 GB of traffic – essentially an unintentional DDoS attack. Criticism of Bytespider has been widespread in Chinese developer communities since the crawler first appeared in server logs in 2023. ByteDance’s response – that “online reports are inaccurate” and that webmasters should contact them via email – has done little to address the issue. On Cloudflare’s network, Bytespider’s share of AI crawler traffic collapsed from 42% to 7% between May 2024 and May 2025, likely reflecting both increased blocking by site owners and changes in ByteDance’s crawling strategy.

Regulation is ahead of tooling. China’s legal framework already addresses the problem from a different angle. The Interim Measures for the Management of Generative AI Services, effective since August 2023, require AI providers to use “data from lawful sources” for training and prohibit infringing on intellectual property rights. Chinese courts have begun ruling on AI-related intellectual property disputes, and the robots.txt protocol has been recognized by Beijing’s courts as “an accepted commercial ethic in the internet search industry” in the Baidu v. Qihoo 360 case. The regulatory pressure is real – but the hosting-level tooling to help site owners enforce their rights has not caught up.

One notable gap: Baidu, which operates China’s dominant search engine and its own large language model (ERNIE), has not published a separate AI training crawler user-agent equivalent to Google’s Google-Extended. Site owners who want to allow Baidu search indexing while blocking Baidu AI training currently have no mechanism to make that distinction – a problem that Google solved by splitting its crawlers and that Baidu has not yet addressed.

Comparing the Approaches

| Provider | Region | Blocking Method | Default State | User Control |

| Cloudflare | US | Network-level + pay-per-crawl (beta) | On (new domains prompted) | Per-crawler policies |

| SiteGround | EU/US | Server-level, training bots only | On | Via support / .htaccess |

| IONOS | Germany | HTTP 429 rate-limiting, selected bots | On (shared hosting) | Limited |

| WP Engine | US | Cloudflare WAF | Automated | None (platform-managed) |

| Squarespace | US | robots.txt toggle | Off (opt-in) | One-click toggle |

| Xserver | Japan | Server-level, 22 crawlers | Off (opt-in) | One-click toggle |

| Kinsta | US | None (absorbs bot bandwidth) | No blocking | Customer’s responsibility |

What This Means for Hosting Businesses

The fragmented response across the industry creates both a competitive opening and a strategic decision point for hosting providers that have not yet acted.

AI crawler management is becoming a baseline hosting feature. When Cloudflare, SiteGround, and IONOS have all implemented some form of AI crawler control – and Squarespace has given site owners a dedicated toggle – the absence of such a feature from a hosting control panel begins to look like a gap rather than a neutral default. Customers are increasingly aware that AI bots are consuming their resources and scraping their content. A hosting provider that offers visible control over this earns trust; one that ignores the issue looks negligent by comparison.

The training-versus-retrieval distinction is the right framework. SiteGround’s approach of blocking training crawlers while allowing user-action crawlers reflects a genuine tension that hosting providers need to help their customers navigate. Blocking GPTBot’s training crawler protects content from being absorbed into a model. Blocking ChatGPT-User prevents a site from appearing when someone asks ChatGPT a question – which is increasingly a source of referral traffic. These are different decisions with different consequences, and hosting providers that can surface this distinction in their control panels are adding real value.

The resource argument is the strongest business case. Copyright concerns motivate content publishers. Privacy concerns motivate regulators. But for hosting companies, the most compelling argument for AI crawler management is operational: uncontrolled AI crawling degrades shared infrastructure, increases bandwidth costs, and creates support tickets. When Read the Docs – a documentation hosting platform – implemented AI crawler blocking in 2024, its bandwidth for downloaded files dropped from approximately 800 GB per day to 200 GB per day. That is the scale of resource consumption that AI crawlers can impose on hosting infrastructure – and that hosting providers are currently absorbing without compensation.

Cloudflare’s pay-per-crawl model deserves close attention. If the model scales beyond private beta, it introduces the possibility that AI crawler traffic becomes a revenue source rather than a cost center. For hosting companies, the implications are significant: infrastructure that is positioned to meter and monetize AI access – rather than simply blocking or absorbing it – could unlock an entirely new billing model. It is too early to predict adoption, but the concept deserves attention from any hosting business thinking about where margins will come from in a market where commodity compute is racing to the bottom.

Łukasz Nowak

Nearly two decades in IT. A decade in web hosting - and still in the trenches. Writing about the infrastructure that runs the internet from the inside.

Sources

- Cloudflare - Declaring Your AIndependence: Block AI Bots With a Single Click (July 2024)

- Cloudflare - Trapping Misbehaving Bots in an AI Labyrinth (March 2025)

- Cloudflare - From Googlebot to GPTBot: Who's Crawling Your Site in 2025 (July 2025)

- Cloudflare - Introducing Pay-Per-Crawl (July 2025)

- Cloudflare - Introducing AI Crawl Control (August 2025)

- SiteGround - How We Handle AI Crawlers on Our Servers

- SiteGround - AI Bot Crawling: What It Is and How We Handle It

- SEO Südwest - IONOS blockiert Zugriffe mancher KI-Bots auf Shared Hosting (August 2025)

- WP Engine - 2025 in Review: 75 Billion Bot Requests Mitigated

- Search Engine Journal - Kinsta Won't Charge for Bot Traffic

- Squarespace - Request That AI Models Exclude Your Site

- Wix - Blocking AI Crawlers From Your Site

- Read the Docs - AI Crawlers Are Abusing Our Infrastructure (July 2024)

- Xserver - AIクローラー遮断設定 公式アナウンス (January 2026)

- Tencent Cloud - How to Block AI Company Crawler User-Agents (Chinese)

- Fortune - ByteDance Is Scraping the Web With Its Bytespider Bot (October 2024)

- State Council - Interim Measures for the Management of Generative AI Services (Chinese)

- SCMP - China's 'Reverse Great Firewall' Quietly Blocking Global Access to Official Data