On the evening of May 5, 2026, servers across Germany were fully operational. Hosting providers such as Hetzner, Strato, and Ionos were running without incident. The domains were registered, delegated, and pointed correctly. None of it helped. Amazon.de, DHL, Deutsche Telekom, Sparkassen, Bahn.de, Spiegel.de, and Web.de became unreachable for millions of users for close to three hours. Every standard monitoring tool showed green. Support tickets started arriving anyway. The problem was not on any server. It was at the registry level, four layers above the hosting provider, and the company that fixed it first was not DENIC.

A New System, Defective Code, and Three Monitoring Alerts That Were Never Processed

DENIC had deployed a new, third-generation signing infrastructure for the .de zone in April 2026, weeks before the incident. The system combined commercial DNS software, dedicated security hardware, and in-house code. It had been tested and externally audited before deployment. The in-house code contained a defect that the tests did not catch.

The defect surfaced during a scheduled key rotation on May 5. The new system was designed to generate a single cryptographic signing key shared across three pieces of security hardware. Instead, the faulty code generated a different key on each device. Only one of these keys was published as the official verification key. The other two kept signing records regardless. The result: roughly two-thirds of all cryptographic signatures served by DENIC’s nameservers could not be verified by any resolver. DNS resolvers with security validation enabled had no choice but to reject those responses and answer with a SERVFAIL error, which users experienced as connection errors for .de domains.
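The two-thirds figure follows directly from the key mismatch, and a toy simulation makes that visible. The sketch below is not DENIC's code: it uses HMAC from the Python standard library as a stand-in for real DNSSEC signatures, and the function names, the record names, and the assumption that signing load is spread evenly across the three devices are all hypothetical.

```python
import hashlib
import hmac
import secrets

# Toy stand-in for DNSSEC: HMAC replaces the real asymmetric signatures,
# so "published key" simply means the one key that validators are given.
NUM_DEVICES = 3  # the three pieces of security hardware described above


def sign(key: bytes, record: str) -> bytes:
    """Sign a record with the given key (HMAC-SHA256 as a placeholder)."""
    return hmac.new(key, record.encode(), hashlib.sha256).digest()


def validates(published_key: bytes, record: str, signature: bytes) -> bool:
    """A validator only accepts signatures made with the published key."""
    return hmac.compare_digest(sign(published_key, record), signature)


def simulate(shared_key: bool, num_records: int = 300) -> float:
    """Return the fraction of signatures a validator would accept."""
    if shared_key:
        key = secrets.token_bytes(32)
        device_keys = [key] * NUM_DEVICES  # intended design: one key everywhere
    else:
        # The defect: every device generates its own key.
        device_keys = [secrets.token_bytes(32) for _ in range(NUM_DEVICES)]
    published_key = device_keys[0]  # only one key is ever published

    valid = 0
    for i in range(num_records):
        record = f"example-{i}.de. NS"  # hypothetical record names
        signer_key = device_keys[i % NUM_DEVICES]  # assumption: even load
        if validates(published_key, record, sign(signer_key, record)):
            valid += 1
    return valid / num_records


print(f"shared key:     {simulate(shared_key=True):.2f} of signatures valid")
print(f"per-device key: {simulate(shared_key=False):.2f} of signatures valid")
```

With one shared key every signature validates. With per-device keys only the third produced by the device holding the published key does, which leaves roughly two-thirds unverifiable.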

The failure also reached domains that had not opted into DNSSEC at all. The signed records that prove a domain is deliberately unsigned also failed validation, so validating resolvers could not confirm that an unsigned .de domain was legitimately unsigned. This blocked resolution for a wider set of .de properties than initially reported.

DENIC’s own monitoring detected the anomaly. According to DENIC’s post-incident analysis published May 8, 2026, three validation tools flagged the problem automatically. The alerts were not acted on in time: “the notifications were not processed correctly.” The outage window ran from approximately 19:36 UTC to 22:30 UTC, close to three hours.

Who Lost Access and Who Did Not

The impact was split in a way that made the incident especially difficult to diagnose. Users whose DNS resolver applies cryptographic verification by default were affected immediately. The three major public resolvers that do this are Google’s 8.8.8.8, Cloudflare’s 1.1.1.1, and Quad9’s 9.9.9.9. Anyone using these resolvers received connection errors for affected .de domains throughout the outage. Users still relying on older ISP resolvers that do not apply this verification saw no disruption at all.

Not every .de domain was equally affected. According to ICANN data, approximately 3.6 percent of .de domains carry DNSSEC signing. With 17.9 million .de registrations as of Q1 2026, that is roughly 645,000 domains. DNSSEC adoption is, however, concentrated among high-traffic properties. Downdetector Germany reports during the outage were dominated by complaints about Amazon.de, DHL, and Web.de, confirming that the affected properties included some of Germany’s most visited sites.

UptimeRobot recorded alert volume rising to roughly three to four times normal evening load, peaking at approximately 10,000 alerts per minute. The problem manifested differently depending on which resolver a visitor happened to be using: the same site, the same server, unreachable for one user and fully accessible for another.

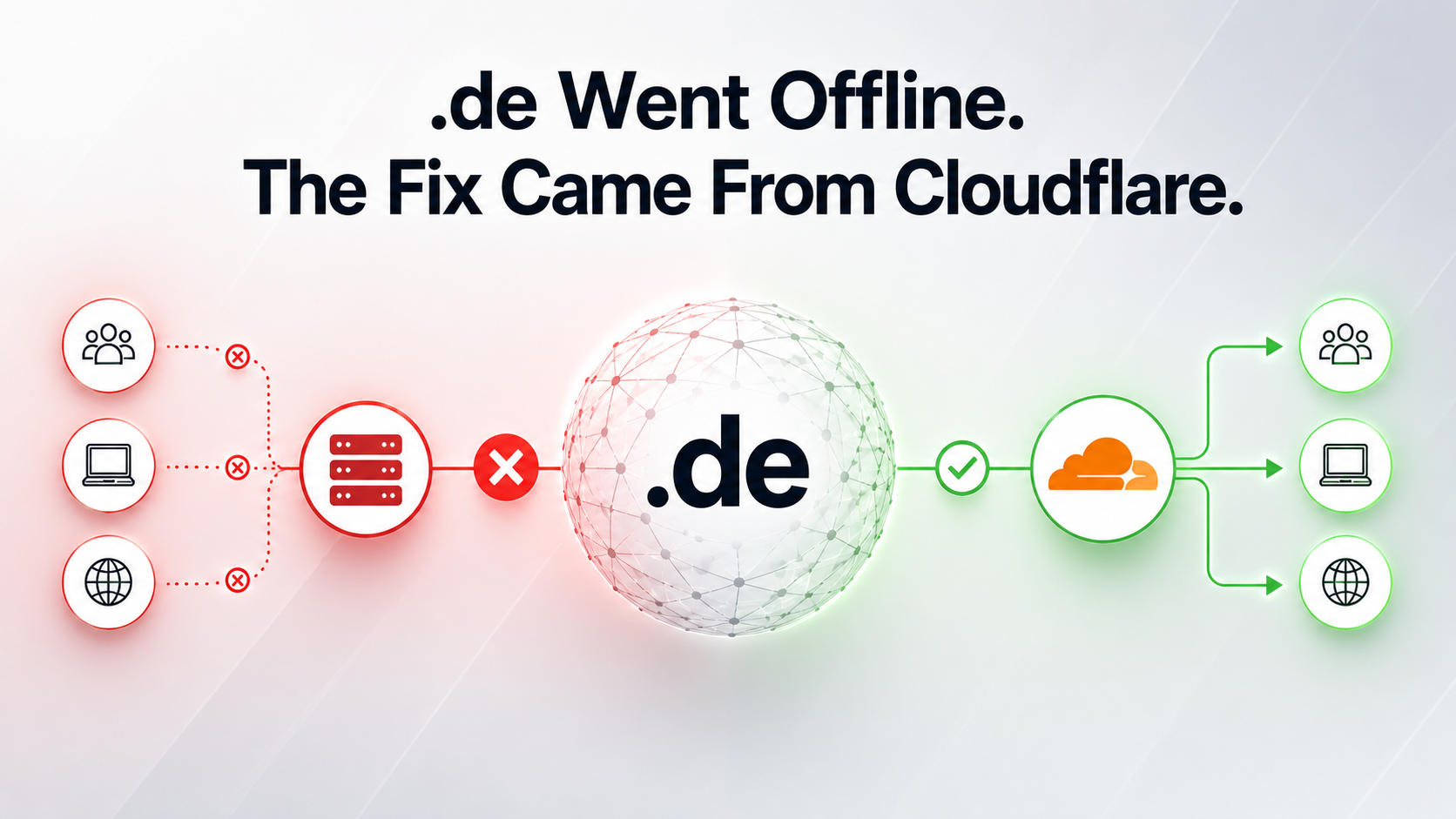

Cloudflare Stepped In While DENIC Was Still Working on a Fix

The outage did not end when DENIC corrected its zone. DNS changes propagate gradually, and cached incorrect responses continue to circulate until they expire. The faster resolution came from resolver operators acting on their own initiative.

At 22:17 UTC, Cloudflare applied a temporary bypass of security verification for the entire .de zone on its 1.1.1.1 resolver, instructing it to serve responses without cryptographic confirmation while DENIC’s zone remained invalid. This restored access immediately for all users of Cloudflare’s resolver. Cloudflare also applied the same adjustment to its internal resolution service used by its CDN customers. Other resolver operators deployed similar measures during the same recovery window.

Cloudflare’s system also provided a partial buffer through a “serve stale” mechanism: for .de domains that had already been cached before the outage, it continued serving those cached responses rather than returning errors. This helped returning visitors but did nothing for users attempting to reach a .de domain for the first time during the outage window.
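The behaviour described here matches the general "serve stale" idea standardised in RFC 8767. The following is a minimal sketch of that idea, not Cloudflare's implementation: the class name, the `upstream` callable, and the `max_stale` window are assumptions made for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Entry:
    answer: str
    expires_at: float


class ServeStaleCache:
    """Toy resolver cache that falls back to expired answers on failure."""

    def __init__(
        self,
        upstream: Callable[[str], Tuple[str, float]],  # returns (answer, ttl)
        max_stale: float = 86400.0,  # hypothetical stale window in seconds
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.upstream = upstream
        self.max_stale = max_stale
        self.clock = clock
        self.entries: Dict[str, Entry] = {}

    def resolve(self, name: str) -> str:
        now = self.clock()
        entry = self.entries.get(name)
        if entry is not None and now < entry.expires_at:
            return entry.answer  # fresh cache hit
        try:
            answer, ttl = self.upstream(name)
        except LookupError:
            # Upstream failed (for example, validation errors). Serve the
            # expired answer if we have one and it is not too old.
            if entry is not None and now < entry.expires_at + self.max_stale:
                return entry.answer
            raise  # never seen before: nothing to fall back on
        self.entries[name] = Entry(answer, now + ttl)
        return answer
```

A name resolved before the failure keeps resolving from the expired entry, while a name the cache has never seen still raises an error. That is the same split between returning and first-time visitors described above.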

The combination of DENIC correcting its zone and resolver operators bypassing verification produced the recovery visible in monitoring data around 22:30 UTC. DENIC’s zone was not fully restored until 01:15 UTC on May 6, about five hours and forty minutes after the incident began. The previous comparable .de-wide disruption of this scale occurred in 2010.
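The intervals between these milestones can be checked with simple arithmetic. The short sketch below uses only the timestamps reported in this article; the dictionary labels and the helper name are illustrative.

```python
from datetime import datetime, timezone

UTC = timezone.utc

# Timestamps as reported in the article (all UTC).
start = datetime(2026, 5, 5, 19, 36, tzinfo=UTC)
milestones = {
    "Cloudflare bypass applied": datetime(2026, 5, 5, 22, 17, tzinfo=UTC),
    "Recovery visible in monitoring": datetime(2026, 5, 5, 22, 30, tzinfo=UTC),
    "DENIC zone fully restored": datetime(2026, 5, 6, 1, 15, tzinfo=UTC),
}


def elapsed(since: datetime, until: datetime) -> str:
    """Format the gap between two timestamps as hours and minutes."""
    total_minutes = int((until - since).total_seconds() // 60)
    hours, minutes = divmod(total_minutes, 60)
    return f"{hours}h {minutes:02d}m"


for label, moment in milestones.items():
    print(f"{label}: {elapsed(start, moment)} after the outage began")
```

This gives 2h 41m to the Cloudflare bypass, 2h 54m to the visible recovery, and 5h 39m to full restoration of the zone.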

Green Dashboard, Unreachable Site: The Infrastructure Gap Hosting Providers Cannot Close

The incident exposed a gap that most businesses had not thought to examine. A typical hosting SLA covers server availability and nothing else. It says nothing about the registry that sits above the server, or the resolver network that mediates between that registry and the user. That evening, every such commitment in the .de ecosystem was technically honoured. Servers were up. Platforms were operational. Customers could not connect.

The companies that identified the root cause fastest were those running external DNS resolution checks: monitoring whether a domain actually resolves from a user’s perspective, not whether the server is responding. That distinction has direct operational consequences. A server health check cannot detect a registry failure. A user-perspective DNS check can. The difference determines how quickly a business moves from “we are investigating” to “here is what happened and here is when it will be fixed.” UptimeRobot’s data showed support ticket volume spiking within minutes of the outage beginning, largely from customers who assumed the problem was with their platform rather than with DENIC.
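A user-perspective DNS check can be small. The sketch below, using only the Python standard library, builds a raw DNS query and reads the response code. It also uses the standard "checking disabled" (CD) flag, which asks a validating resolver to skip DNSSEC validation: if a name fails normally but resolves with CD set, the fault is probably in the signing chain and not on the server. The function names and the classification wording are assumptions, and the sketch skips details a production check would need, such as matching response IDs, retries, and TCP fallback.

```python
import socket
import struct

RD = 0x0100  # "recursion desired" header flag
CD = 0x0010  # "checking disabled": ask the resolver to skip DNSSEC validation
RCODES = {0: "NOERROR", 2: "SERVFAIL", 3: "NXDOMAIN"}


def build_query(name: str, query_id: int = 0x1234,
                checking_disabled: bool = False) -> bytes:
    """Build a minimal DNS query for the A record of `name`."""
    flags = RD | (CD if checking_disabled else 0)
    header = struct.pack("!HHHHHH", query_id, flags, 1, 0, 0, 0)
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", 1, 1)  # QTYPE=A, QCLASS=IN


def rcode(response: bytes) -> str:
    """Extract the response code from the low 4 bits of the header flags."""
    flags = struct.unpack("!H", response[2:4])[0]
    code = flags & 0x000F
    return RCODES.get(code, f"RCODE{code}")


def ask(resolver_ip: str, name: str, checking_disabled: bool = False,
        timeout: float = 3.0) -> str:
    """Send one UDP query to a resolver and return the response code."""
    query = build_query(name, checking_disabled=checking_disabled)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(query, (resolver_ip, 53))
        response, _ = sock.recvfrom(4096)
    return rcode(response)


def classify(validated: str, unvalidated: str) -> str:
    """Interpret the pair of results (normal query, CD query)."""
    if validated == "NOERROR":
        return "resolves normally"
    if validated == "SERVFAIL" and unvalidated == "NOERROR":
        return "likely DNSSEC validation failure upstream"
    return "other DNS failure"


def diagnose(resolver_ip: str, name: str) -> str:
    """Run both queries against one resolver and classify the outcome."""
    return classify(ask(resolver_ip, name),
                    ask(resolver_ip, name, checking_disabled=True))
```

Running `diagnose` against each of the validating public resolvers named earlier (8.8.8.8, 1.1.1.1, 9.9.9.9) for a domain you operate would show whether users behind them can resolve it, independently of whether the web server itself is healthy.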

The sharpest observation from that evening is not about monitoring or SLAs. It is about who actually controls access to the web at scale. The decision that restored Amazon.de, DHL, and Bahn.de for millions of users was made by Cloudflare’s engineering team at 22:17 UTC. Not by DENIC. Not by any of the affected businesses. A private infrastructure company applied a unilateral workaround that bypassed security verification for an entire national domain extension, and that decision determined when users got their access back. For any business that assumed its uptime was a function of its own infrastructure choices, the incident was a precise illustration of how many layers it does not choose and cannot influence.

Sources

- Analysis of the DNS Outage on 5 May 2026 - DENIC Blog (official)

- DENIC Reports Resolved DNSSEC Disruption Affecting .de Domains - DENIC Blog (official)

- DENIC Reports DNSSEC Disruption Affecting .de Domains - DENIC Blog (official)

- When DNSSEC Goes Wrong: How We Responded to the .de TLD Outage - Cloudflare Blog (official)

- Inside the .de DNS Outage: Real-World Data from UptimeRobot - UptimeRobot Blog

- Germany's .de Domain Faces Outage - Domain Name Wire

- Denic Sorry for DNSSEC Error That Crashed Germany's Internet - The Register