A major outage hit Amazon Web Services in the United Arab Emirates after “objects” struck a data centre building, causing sparks and a fire. Local emergency crews cut power to the facility as they worked to put out the fire. What started as a problem in one Availability Zone later spread into a wider regional failure, with two zones impaired and the company warning recovery would take at least a day.

Amazon did not say what the “objects” were. When asked if the incident was linked to the regional missile and drone strikes reported the same day, the company did not confirm or deny any connection, according to Reuters.

By early Monday, AWS said the outage had grown from a single-zone power shutdown into a situation where two Availability Zones were impaired. AWS warned that even management tools were affected: customers saw elevated errors for the web console and command-line access, and the company advised customers to use disaster recovery plans and restore from backups into other regions.

Problems were not limited to the UAE. AWS also reported a separate “localized power issue” affecting one Availability Zone in the Middle East (Bahrain) region, with added knock-on issues like delays pushing some DNS changes to local points of presence.

The story matters beyond one company because the UAE has become a key location for international cloud and data centre investment.

Timeline of the outage

AWS’s public updates are time-stamped in US Pacific time (PST) in the provided incident logs. The event itself is described as beginning around 4:30 AM PST on March 1 (which is 12:30 UTC, and 4:30 PM in the UAE).

Early updates describe a localized power issue affecting a single UAE Availability Zone, with AWS shifting traffic away from the broken zone where possible. The same log shows that customers quickly hit errors on key networking APIs used for basic operations like allocating and associating IP addresses, even outside the failed zone.

Later on March 1, AWS explained the physical trigger: “objects” struck the data centre, sparks and a fire followed, and the fire department cut power to both the facility and its generators during the response. AWS said it was waiting for permission to turn power back on and warned that restoring connectivity would take hours.

By the afternoon and evening, AWS reported partial recoveries in the control plane (for example, improvements for the AllocateAddress and AssociateAddress API paths) and deployed changes that let customers move Elastic IP addresses away from dead resources. Those fixes mattered because customers could not always “detach and reattach” network identity while the broken zone had no power.

A major turning point came late March 1, when AWS confirmed that a second UAE Availability Zone was also hit by a localized power issue. The log states that at that stage it became impossible to launch new instances in the region, and regional services like S3 and DynamoDB showed significant error rates and latency.

On March 2, AWS warned that recovery for the two impaired UAE zones would take at least a day, citing repair of facilities and cooling and power systems, coordination with local authorities, and safety checks for staff. AWS also told customers to enact disaster recovery plans and restore from remote backups into other regions.

In parallel, AWS posted a separate incident thread for the Bahrain region, again warning that recovery could take at least a day for the affected zone and advising customers to restore from snapshots or rebuild in other zones or regions if they needed fast results.

Scope of the outage and who felt it

The outage is especially severe because it moved beyond “one data centre building down.” With two zones impaired, AWS said core regional services and management access became unreliable: customers saw high failure rates for S3 ingest and egress, significant errors for DynamoDB, and elevated errors affecting both the AWS Management Console and command-line tools.

A key point in AWS’s own messaging is that applications built to run across multiple Availability Zones can keep serving users when one zone fails. AWS repeated that customers running redundantly across Availability Zones were not impacted by the initial single-zone event.

But the documents also show why many customers still struggled even if they had “some redundancy.” Regional control-plane problems (API errors across the region) made it harder to launch replacements, move addresses, or scale up in the healthy zone. AWS even warned that launching new instances became impossible across the whole UAE region once the second zone was impaired.

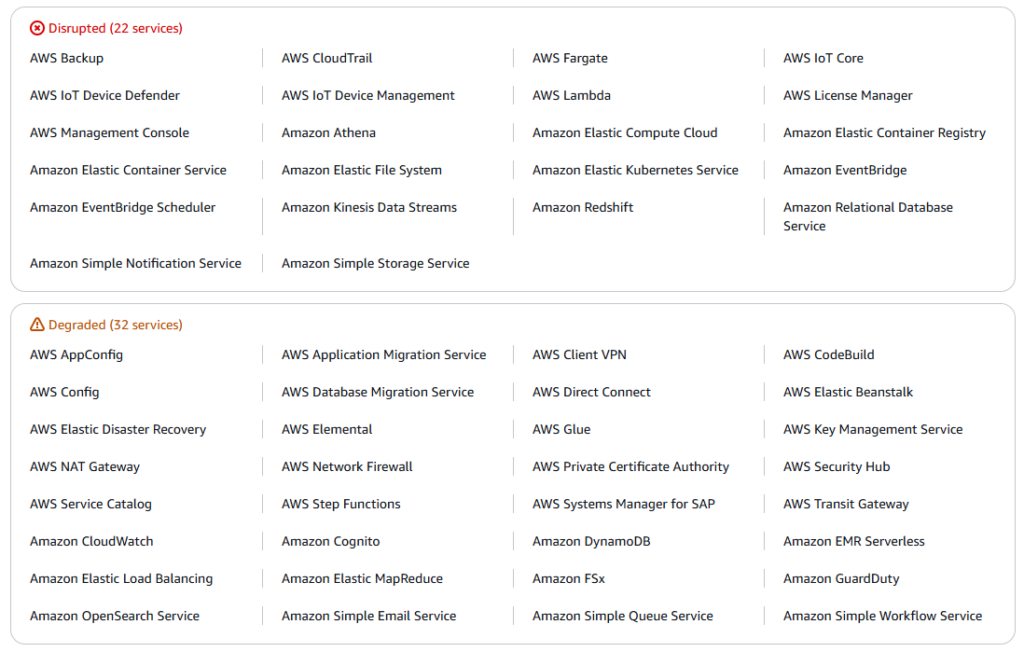

In one captured status snapshot, 19 services were marked disrupted, spanning serverless (Lambda), containers (ECS/EKS/Fargate), data services (S3/Redshift/Athena/Kinesis), and the management console itself. This is a sign of broad, cross-service impact rather than a narrow outage in a single product.

The documents also describe “pressure wave” effects: as customers tried to move workloads into the remaining healthy zones, AWS warned of longer provisioning times and harder-to-get instance types due to increased demand. That shortage risk is common during large regional incidents because many clients try to fail over at once.

Beyond technology firms, Abu Dhabi Commercial Bank publicly said its platforms and mobile app were unavailable because of a wider regional IT disruption, though it did not directly blame AWS.

Factors that turned one facility incident into a regional crisis

The first factor was physical: a strike and fire response can force a “hard power off,” including backup systems. AWS’s log states that the fire department shut off power to the facility and generators during the response, and AWS was waiting for permission to restore power. That kind of shutdown can leave resources unavailable until facilities are safe to re-energize.

The second factor is the way AWS is built. An AWS Region is a cluster of data centres in a geographic area, split into multiple Availability Zones; each zone is one or more discrete data centres with independent power and networking. The UAE region (me-central-1) has three Availability Zones, and the Bahrain region (me-south-1) also has three. When one zone fails, the design goal is that customers can keep running in other zones — but only if they planned for it.

The third factor was the “second hit.” AWS explicitly said S3 is designed to withstand the loss of a single Availability Zone while maintaining durability and availability, and AWS documentation also states that S3 stores data redundantly across a minimum of three Availability Zones by default. In this incident, AWS said S3 stayed normal after the first zone lost power, but error rates climbed when a second Availability Zone became impaired, leading to high failure rates for ingest and egress. In plain terms: S3 is built to survive one zone going away, but two zones breaking at the same time can still create major availability problems.

The fourth factor was “control plane trouble.” The documents show that even customers outside the dead zone faced problems because key EC2 networking APIs failed across the broader region for hours. When those APIs break, basic recovery steps can stall: moving or reassigning IP addresses, launching replacements, and updating network settings may not work until AWS restores or patches the control plane.

The fifth factor was crowding in the surviving zones. AWS warned customers to expect longer provisioning times and to retry or choose other instance types because of demand surges in the unaffected zones. For hosting providers and large SaaS firms, this can turn a “simple failover” into a longer outage if capacity is not available at the time everyone needs it.

The sixth factor was regional instability and uncertainty. AWS has not identified the “objects,” and it did not confirm a link to the regional strikes. Still, multiple news outlets reported the timing alongside a wider conflict and noted the new risk that data centres could become part of a conflict’s target list.

Kamil Kołosowski

Author of this post.